Dr Keith Grimes, Founder & CEO, Curistica

In June 2024, ransomware crippled Synnovis, the pathology provider for King's College Hospital, Guy's and St Thomas', and the Evelina. Operations were cancelled, transfusion stocks were rationed, and clinicians worked without the routine results they rely on. A patient subsequently died, with the cyber attack cited as a contributing factor in the harm review. That single line is the cleanest answer I can offer to anyone still arguing that cybersecurity sits outside the clinical safety officer's remit.

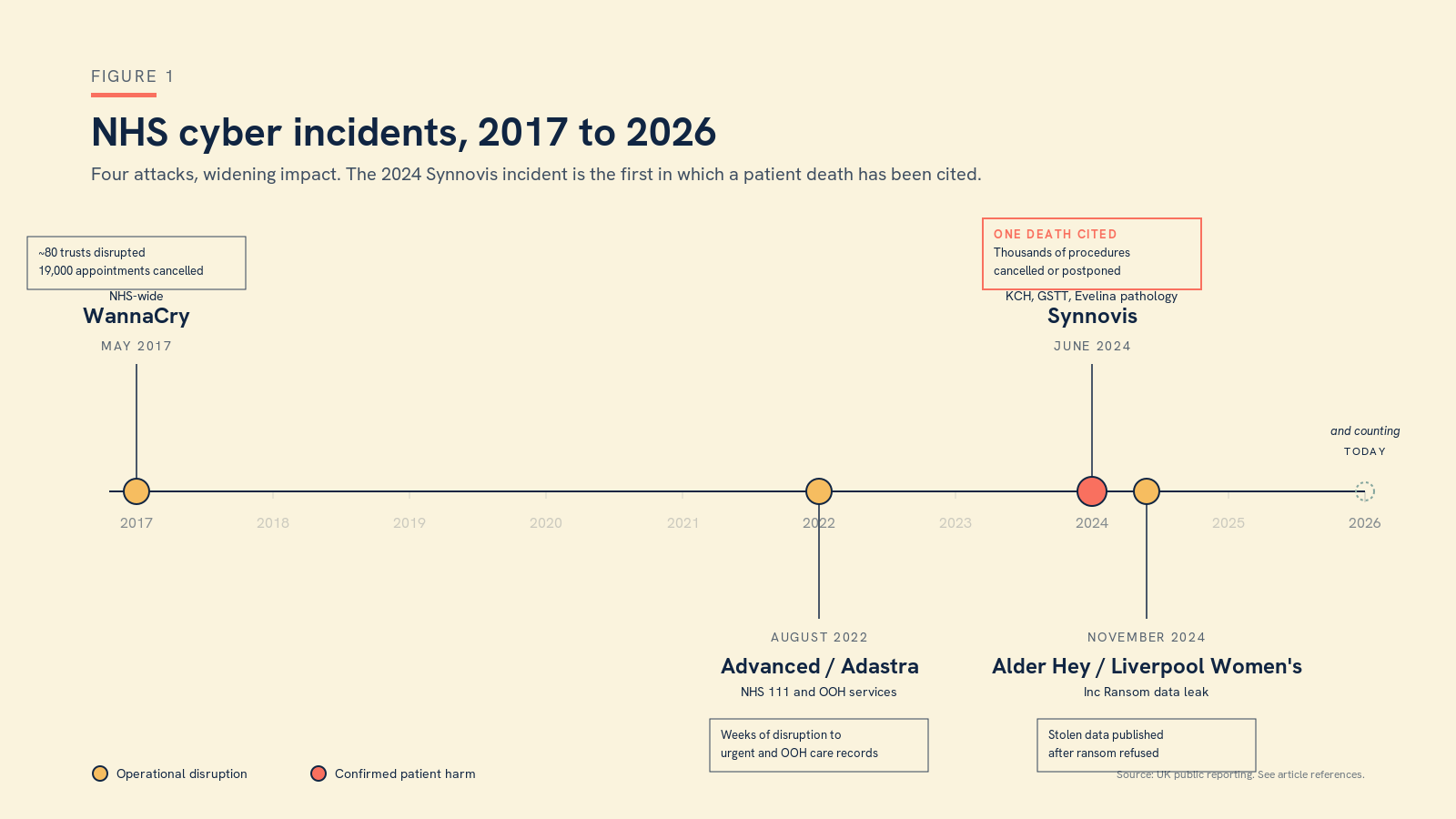

I was reminded of the Synnovis case last week listening to Annabelle Painter on the RSM Digital Health Section podcast, making the case that cybersecurity is a clinical risk in its own right. She is right, and the evidence is no longer in short supply. WannaCry in May 2017 was the wake-up call most of us still remember. The Advanced/Adastra ransomware attack in August 2022 took out the patient records system used across NHS 111 and out-of-hours services for weeks. INC Ransom went after Alder Hey and Liverpool Women's in November 2024 and dumped stolen data when the trusts refused to pay. Synnovis put the cost in lives. None of this is theoretical, and none of it should be filed as somebody else's problem.

Figure 1. Four NHS cyber incidents in under a decade. Synnovis is the first in which a patient death has been cited.

What is changing now is the speed at which the threat curve is bending. The AI Safety Institute's recent evaluation of Claude's Mythos cybersecurity features makes for uncomfortable reading. Frontier models are becoming genuinely capable of the kind of multi-step, autonomous offensive work that used to require an experienced human operator. The capability uplift is real, it is asymmetric, and it is widely available. If your threat model still assumes attackers move at human speed and pay human wages, it is already out of date.

There is, against that, a reason for cautious optimism, and it is the bit that gets less airtime than it deserves. Anthropic's Project Glasswing is a deliberate effort to put advanced AI tooling into the hands of the defender community first, in partnership with serious cybersecurity firms. It is the closest thing the field has had in a long time to a deliberate head start for the good guys. The shape of the next decade depends on whether defenders can stay a useful step ahead of attackers, and frontier labs choosing sides matters more than is often acknowledged. I take it as a hopeful signal.

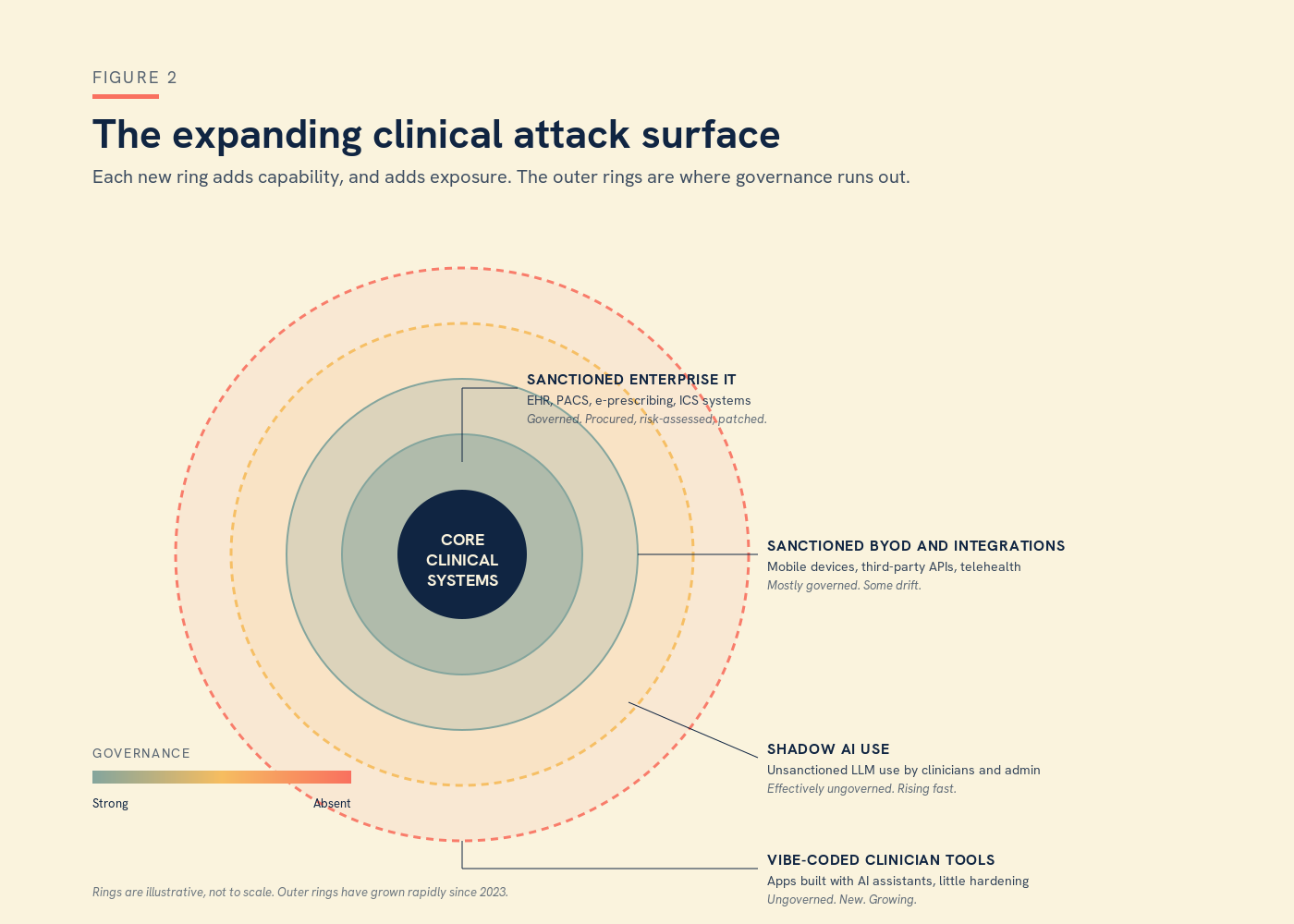

But none of that addresses the threat that is already inside our walls, and that I think the clinical safety community is least prepared for. Vibe coding, by which I mean the use of AI assistants to write working software with little or no formal engineering background, has put apps that look and feel production-ready within reach of every clinician with an idea and a free afternoon. Some of what is being built is genuinely useful. Almost none of it has been hardened. The clinician who builds a triage chatbot over a weekend is unlikely to have thought through authentication, audit logging, data residency, dependency management, secrets handling, or what happens when the model provider deprecates an endpoint. Shadow AI use is rife, and prohibition is no longer realistic. Every trust IT team I speak to is quietly discovering tools they did not sanction, running on devices they did not issue, processing data they did not classify.

Each of those tools is an attack surface. Each of them is also an unmanaged clinical risk. The two are the same risk seen from different angles.

Figure 2. The clinical attack surface in 2026. The new outer rings, shadow AI and vibe-coded clinician tools, are growing faster than governance can keep up with.

The CSO role must professionalise around this

So here is the working argument for the clinical safety profession. Cybersecurity belongs on the clinical safety officer's (CSO's) desk, not as a specialism to be acquired, but as a working knowledge to be maintained. The CSO does not need to become the chief information security officer (CISO), and probably should not try, but does need to know enough to ask the right questions, to scope a hazard properly, and to recognise when something is being underplayed. Cyber-related hazards belong in the hazard log, scored on patient impact like anything else, with controls and evidence in the usual columns. This is a critical, professional role, and it is time the clinical safety community treated it as one.
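For readers who like to see the shape of things, here is a minimal sketch of what a cyber entry in a hazard log could look like. Everything in it is an assumption for illustration: the field names, the 1 to 5 scales, the multiplication used for scoring, and the escalation threshold are mine, not taken from any standard or from a real organisation's risk matrix.

```python
from dataclasses import dataclass, field

# Illustrative 1-5 scales (assumption). A real hazard log would use the
# organisation's own agreed risk matrix rather than these numbers.
SEVERITY = {"minor": 1, "significant": 2, "considerable": 3, "major": 4, "catastrophic": 5}
LIKELIHOOD = {"very low": 1, "low": 2, "medium": 3, "high": 4, "very high": 5}


@dataclass
class Hazard:
    """One row of a hypothetical hazard log."""
    hazard_id: str
    description: str
    cause: str
    patient_impact: str
    severity: str
    likelihood: str
    controls: list[str] = field(default_factory=list)
    evidence: list[str] = field(default_factory=list)

    def risk_score(self) -> int:
        # Simple severity x likelihood product (assumed scoring rule).
        return SEVERITY[self.severity] * LIKELIHOOD[self.likelihood]

    def needs_escalation(self, threshold: int = 12) -> bool:
        # Escalate if the score is high, or if no control has been recorded.
        return self.risk_score() >= threshold or not self.controls


# A cyber hazard scored on patient impact, like any other hazard.
h = Hazard(
    hazard_id="HAZ-042",  # hypothetical identifier
    description="Pathology results unavailable to clinicians",
    cause="Ransomware at third-party laboratory provider",
    patient_impact="Delayed or unsafe treatment decisions",
    severity="major",
    likelihood="medium",
    controls=["Manual results pathway rehearsed quarterly"],
    evidence=["Downtime exercise report"],
)
print(h.hazard_id, h.risk_score(), h.needs_escalation())
```

The point of the sketch is that the cause column says "ransomware" while the impact and severity columns are still about patients, which is exactly where the CSO's judgement is needed.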

Business continuity needs an honest rethink

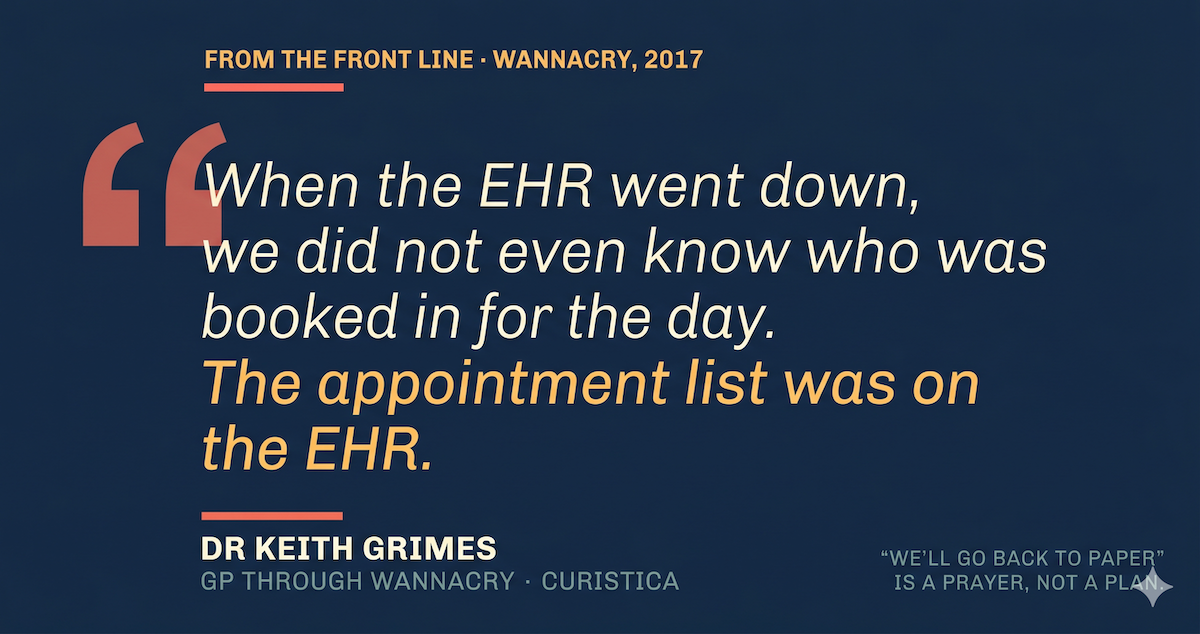

Business continuity planning needs an honest, urgent rethink. The comforting line in most plans is that the organisation will "revert to paper" when systems are down, and in my experience that line tends to be written by people who have never tried to do it. I was a GP through WannaCry, and when the EHR went down we did not even know who was booked in for the day, because the appointment list was on the EHR. That was not a failure of paper. It was a failure to notice that the thing we were proposing to fall back on was already wholly dependent on the thing that had just broken. Real business continuity starts from the assumption that core systems will be unavailable, and walks forward from there, step by step, asking what still works, what does not, and what the clinical implications of each gap actually are.
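The "walk forward from the failure" exercise can be sketched in a few lines. This is a toy model under stated assumptions: the capability names and the dependency map are hypothetical, loosely based on the WannaCry experience described above, and a real plan would need a far richer map built with the people who actually run the services.

```python
# Hypothetical dependency map: each capability lists what it needs to function.
DEPENDS_ON = {
    "ehr": [],
    "appointment_list": ["ehr"],          # the list lives inside the EHR
    "paper_consultation_notes": [],
    "paper_clinic": ["appointment_list", "paper_consultation_notes"],
    "phone_triage": [],
}


def unavailable(failed: set[str], depends_on: dict[str, list[str]]) -> set[str]:
    """Return everything that stops working once the given systems fail."""
    down = set(failed)
    changed = True
    while changed:  # keep propagating until no new failures appear
        changed = False
        for capability, needs in depends_on.items():
            if capability not in down and any(n in down for n in needs):
                down.add(capability)
                changed = True
    return down


down = unavailable({"ehr"}, DEPENDS_ON)
still_working = sorted(set(DEPENDS_ON) - down)

print("Down:", sorted(down))
print("Still working:", still_working)
```

In this toy map the paper clinic goes down with the EHR, not because paper failed, but because it quietly depends on an appointment list that lives in the system that broke. Each item in the "down" list is then a prompt for the clinical question: what does losing this mean for patients today?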

AI use policy written for the world as it is

The AI use policy needs an equally hard rethink. Shadow AI use is now the norm rather than the exception, and a policy that pretends otherwise is a policy that has already failed, even if it has not yet failed on paper. Pragmatic policy is written for the world as it is, not the one we wish we had. It assumes use, sets safe defaults, names approved tools and patterns, and gives clinicians who want to build something new a clear, supported route in. Blanket bans push the activity further into the shadows, where it cannot be seen, supported, or assured, and where the next breach will quietly originate.

And the compliance picture, in which DCB0129, DCB0160, the DSP Toolkit, DTAC, NIS2, and the various cyber assurance frameworks all overlap and occasionally contradict, needs deliberate expert input rather than a hopeful glance once a year.

What this looks like in practice

In practical terms, that means a CSO who can read a penetration test report, who can sit in the same room as a CISO without translation, who knows what a secure software development lifecycle should look like even if they do not write the code themselves, and who treats every new AI deployment as a hazard identification exercise as much as a procurement one. It also means a profession willing to professionalise around this responsibility, rather than waiting to be told it now belongs to us.

The era in which clinical safety and cybersecurity could be cleanly separated is over. The patients we are accountable to do not care which committee owns the risk. They care that the system works, that it is there when they need it, and that no one comes to harm because a server was left exposed or a weekend project went into use without anyone noticing.

If you would like to think this through for your own organisation, we are always happy to have the conversation.

Dr Keith Grimes is the founder and CEO of Curistica.