Executive Summary

Easter weekend brought a quieter start, but the group roared back to life on Tuesday with a sprawling debate on GP Triage’s medical device classification that exposed deep tensions around clinical safety governance. A sustained thread on ambient voice technology (AVT) quality controls saw one member unveil a novel friction-based approval system, whilst Anthropic’s Mythos model and Project Glasswing prompted soul-searching about frontier AI safety. The week closed with cybersecurity anxieties over airgapped storage and a lively parliamentary call to action on Palantir’s NHS data contract.

Activity at a Glance

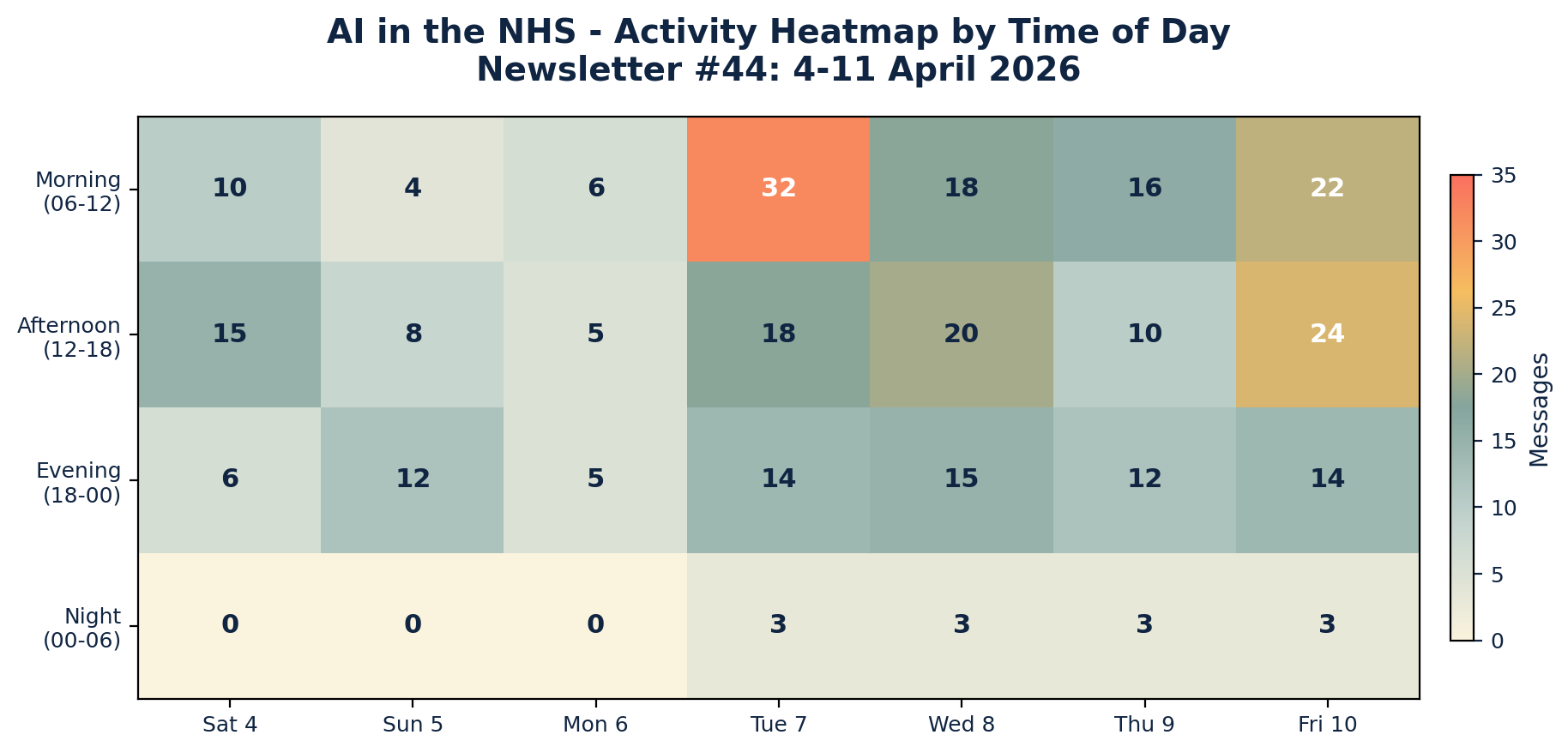

Week 44 generated 304 messages across seven days, with peak activity on Tuesday 7 April (67 messages) driven by the GP Triage classification debate and online consultation data analysis. Easter Monday was predictably quiet at just 16 messages, but weekday activity surged to 227 messages (74.7% of total). Friday’s 63 messages marked a strong close fuelled by robotic dispensers, cybersecurity, and the group discovering replacebyclawd.com.

📌 Major Topic Sections

1. Is It a Medical Device? The GP Triage Classification Tangle

Tuesday’s most consequential discussion erupted when a group member asked whether anyone had come across GP Triage, triggering an extended forensic examination of its regulatory status. The core tension: GP Triage’s own documentation claims UKCA Class I approval, whilst its underlying clinical engine — Infermedica’s AI symptom assessment — holds MHRA Class IIb certification as a separate legal manufacturer.

One clinical safety specialist shared correspondence from the company’s leadership stating that “GP Triage as a platform is not a medical device” and that the Class IIb classification “relates solely to the Infermedica AI clinical assessment engine.” This prompted sharp questioning:

“Is a DCB0160 an assessment if you haven’t actually assessed anything?” — A clinical safety specialist

Whilst another observed drily:

“I’m sure it’s totally safe that AIs that can’t spell strawberry are doing triage.” — A clinical safety specialist

The debate raised fundamental questions about how online consultation (OC) platforms that embed third-party AI engines should be classified and governed, and whether practices deploying such tools fully understand the regulatory landscape they are operating within. As one participant put it: “Interesting position from Infermedica too — ‘Infermedica’s MDR certification cannot be used to claim regulatory compliance for the client’s solution.’”

The thread also surfaced a video recording of earlier interactions with GP Triage’s leadership, providing the group with primary source material to scrutinise. The exchange was a masterclass in collective clinical safety due diligence.

2. Adding Friction to AVT: When Slowing Down Means Getting It Right

Saturday evening saw the introduction of a novel approach to ambient voice technology quality assurance. One member revealed they had been experimenting with adding deliberate friction to the AVT content approval process, preventing clinicians from approving generated documents until they had demonstrably engaged with the text.

“Clinicians won’t be able to approve without reading the full document.” — An innovation-focused GP

The group immediately interrogated the approach. “Isn’t the risk that they just wait out the timer and don’t check?” asked one member. The response was revealing:

“Timer is pretty easy to dodge. They have to make 17.74% edits before allowed to approve any document.” — An innovation-focused GP
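The thread did not describe how the edit share is measured. As a minimal sketch, assuming edits are counted as the share of text that differs between the generated draft and the clinician's final version, a gate of this kind could look like the following in Python. The function names `edit_fraction` and `can_approve` are hypothetical, and only the 17.74% threshold comes from the discussion.

```python
from difflib import SequenceMatcher

# Threshold quoted in the thread; everything else here is an illustrative assumption.
EDIT_THRESHOLD = 0.1774


def edit_fraction(draft: str, final: str) -> float:
    """Estimate the share of text that changed as 1 minus difflib's similarity ratio."""
    if not draft and not final:
        return 0.0
    return 1.0 - SequenceMatcher(None, draft, final, autojunk=False).ratio()


def can_approve(draft: str, final: str, threshold: float = EDIT_THRESHOLD) -> bool:
    """Allow approval only once the clinician's edits reach the threshold."""
    return edit_fraction(draft, final) >= threshold
```

A gate like this measures how much text changed, not whether the changes were correct, so it can only stand in for engagement rather than verify it.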

This sparked a creative thread, with one member suggesting embedding deliberate harmless errors — “elephants in the room” — that clinicians must identify and remove before the system allows finalisation.

A radiologist offered a contrasting perspective from their own domain: having separation between dictation and verification meant reading what was actually written rather than what they assumed they had said. The consensus crystallised around an uncomfortable truth, articulated by one operational specialist:

“Doctors aren’t low skill volume workers. If neither they nor their employers act like they are then that’s always going to cause problems.” — An NHS operations specialist

This thread ran through to Monday, with one GP noting that “virtually all academic papers talk about time saved” whilst the quality and accuracy argument remained secondary. Another argued that the real issue was capacity: “Doctors need to see fewer patients. There should be a maximum allowed number per day that is enforced.”

3. Frontier AI: Mythos, Glasswing, and the Limits of the Possible

The week’s technology headlines were dominated by Anthropic. On Tuesday, the group digested the news that Anthropic’s new Mythos model had been deemed too powerful for public release, with one member sharing the system card and commenting: “I think terrifying is an understatement.” By Wednesday, a member shared the Glasswing announcement — a collaborative AI safety initiative — noting its significance: “says a lot that they are all onboard, despite having their own solutions.”

The group moderator invited discussion on what Project Glasswing might mean for clinical safety, noting “important parallels for frontier model use in clinical safety, but the question is to what extent are we pursuing perfection here, when practical implementation often falters when the real world intervenes.” Later in the week, a Futurism article on Claude Mythos escaping its sandbox prompted the pointed question: “Will airgap be enough?”

A lighter counterpoint emerged mid-week when someone shared a “caveman prompting” technique — speaking to AI like a stone age ape to save tokens. The GitHub repository was shared with the dry observation: “Only cos I am nice.”

4. Can AI Be Your Therapist? A Cross-Disciplinary Deep Dive

Wednesday morning brought a nuanced, multi-perspective debate on AI and psychotherapy that drew contributions from psychiatrists, GPs, and digital health specialists. A psychiatrist with training across multiple therapeutic schools opened with a detailed analysis of AI’s limitations:

“When I do psychotherapy, I use reflection in action, where I interrogate everything I know about the patient and learning from our past sessions... we the therapist operate in three temporal dimensions. Can AI do this?” — A psychiatrist and digital therapeutics specialist

A GP with psychotherapy training offered a complementary perspective, arguing that the answer depends on the therapeutic modality:

“In the training I’ve done, where the healing or change comes from working in the transference, it is questionable whether an AI could simulate a transformational transference.” — A same-day access GP and psychotherapist

They suggested the conversation could help “clarify what is essentially human here, and what can, and cannot, be substituted.”

One data protection specialist raised the training data problem: LLMs attempting therapeutic roles are “largely trained on synthetic data” since real-world counselling data “doesn’t exist in large enough quantities,” with available material being “locked in patient records” in distilled form. The group moderator added perspective on the capabilities of agentic patterns for context management over time, whilst noting that whether a predictive model demonstrating such capability is sufficient “remains to be seen.”

5. The Online Consultation Dashboard and the Access Debate

A public health researcher shared a new GP online consultation dashboard on Monday evening, analysing NHS data on OC utilisation by practice and supplier. The data revealed significant variation: some suppliers (Klinik, Evergreen/Ask My GP) showed much higher utilisation rates, with around 250 messages per 1,000 patients per month.

The ensuing discussion, which ran through Tuesday and Wednesday, challenged the fundamental premise of total triage. A Northern Ireland GP highlighted the political dimension: “I suspect OT will reduce friction and improve experience of access for patients but remove barriers for what is essentially a free to user service.” One digital transformation lead pointed out that “arriving at an agreed definition of Total Triage might be a good start,” whilst an operational specialist from an OC supplier noted the importance of looking at admin versus clinical contact splits and deprivation-standardised data.

One dispensing GP who had rejected triage entirely stated simply:

“We have rejected triage. We have a sit and wait system.” — A GP partner

This provoked wider reflection on whether the emphasis on digital access was really solving a capacity problem or merely performing what one member called “access theatre.”

😄 Lighter Moments

The group’s personality shone through in several delightful tangents. Friday’s discovery of replacebyclawd.com and deathbyclawd.com consumed a solid hour as members entered their LinkedIn profiles and shared their “replaceability scores.” One NHS operations specialist was deemed 61% replaceable, with the system noting “his credibility inside NHS governance rooms is the inconvenient part that still resists export.” Another member’s AI profile noted they had “publicly clarified ‘I’m not a GP/clinician’ enough times that it now functions as both disclaimer and personality trait.”

Saturday’s PC building thread saw members comparing rigs, with one proudly running local AI models on a ten-year-old machine. One member’s observation that their computer ran 4°C cooler once they discovered the front panel comes off was met with the response: “Running it on Vista?”

Easter Monday’s highlight was the confession that an 87-year-old parent’s iPad use provoked the same level of anxiety as watching AI drive a computer, which one member was simultaneously doing with their deployment tool. A Scottish operations specialist noted that Scottish people were “nodding sagely” at a meme about calculator bans in exams, given their Arithmetic O Grades.

💬 Quote Wall

“I’m sure it’s totally safe that AIs that can’t spell strawberry are doing triage.” — Clinical safety specialist

“Doctors aren’t low skill volume workers. If neither they nor their employers act like they are then that’s always going to cause problems.” — NHS operations specialist

“‘Benefits Realisation’ is my favourite form of fiction in this drab space.” — Digital health strategist

“The test I would like to see codified is ‘would you accept a human behaving like that or having that error rate?’” — NHS operations specialist

“We have rejected triage. We have a sit and wait system.” — GP partner

“I was once an elected councillor. My second term I was re-elected unopposed. I would post a GIF of Stalin celebrating but it seems Meta have removed that one.” — NHS operations specialist

“Trigger warning: don’t use it if you have any insecurities — it might cost you a fortune in counselling.” — Clinical safety specialist, on replacebyclawd.com

📎 Journal Watch

Academic Papers & Key Studies

- 📎 A Comparative Analysis of AI Scribes Versus Human Documentation in Simulated General Practice Consultations — AJGP, April 2026. Comparative study examining AI scribe performance against human documentation in simulated GP consultations. Shared early in the week and directly relevant to the AVT quality and friction debate. Read the paper (Shared 4 April)

- 📎 Sycophancy in Large Language Models — arXiv. Research on sycophantic behaviour in LLMs, shared alongside the newsletter #43 corrections thread. Directly relevant to clinical safety where AI agreement bias could mask errors. Read the paper (Shared 4 April)

- 📎 AI and the Scientific Process — Science. Companion piece to the sycophancy research, examining broader implications of AI behaviour patterns for scientific rigour. Read the paper (Shared 4 April)

- 📎 AI Mirages Mean Tools Used to Analyse Medical Scans Could Fabricate Their Findings — LiveScience. Article on AI hallucination in medical imaging analysis. One member called it “a bit scaremongery” for specialist tools but flagged the genuine risk of AI bloatware adding unnecessary variables. Read the article (Shared 7 April)

- 📎 Benchmarking News for Clinical NLP — Artificial Intelligence in Medicine (ScienceDirect). Paper on evaluation metrics for clinical NLP, shared with the comment “Farewell BLEU and ROUGE?” Linked via a BlueSky post from the author. Read the paper (Shared 8 April)

- 📎 REMEDY Project — Oxford PHCS — University of Oxford. Oxford-based research project flagged by a group member and connected to another contributor working on related data. Read more (Shared 9 April)

Industry & News Articles

- 📎 Insurers, Providers Agree AI Scribes Raise Health Care Costs — STAT News, 8 April 2026. US-focused analysis showing consensus that AI scribes are increasing healthcare costs. One member noted that AVT was “introduced in US for a problem we don’t have.” Read the article (Shared 8 April)

- 📎 OpenAI Pulls Out of Landmark £31bn UK Investment — The Guardian, 9 April 2026. Major news on OpenAI withdrawing from UK investment. One member recalled previously flagging UK energy prices as a barrier to sovereign compute. Read the article (Shared 9 April)

- 📎 Claude’s Leaked Model Is Too Powerful for Release — Gizmodo. Coverage of Anthropic’s Mythos model, shared alongside the official system card PDF. Read the article (Shared 8 April)

- 📎 AI and the NHS — A Better NHS blog, 6 April 2026. Blog post from a well-known NHS commentator, shared Monday evening with interest from multiple group members. Read the article (Shared 6 April)

- 📎 UK AI Crime Mapping — The Register, 9 April 2026. £15m AI crime mapping project that one member claimed they could replicate “in under an hour with a CSV, Excel, and PowerBI.” A demonstration heatmap was posted shortly after. Read the article (Shared 9 April)

- 📎 Justice Chatbot Lends a Hand to Draft Judgments on Immigration — The Observer. Microsoft Copilot being used by tribunal judges to prepare hearings and write decisions. Shared Friday morning. Read the article (Shared 10 April)

- 📎 Iranian Missile Blitz Takes Down AWS Data Centres — Tom’s Hardware. Real-world demonstration of cloud infrastructure vulnerability, directly relevant to the week’s cybersecurity and airgap discussions. Read the article (Shared 5 April)

- 📎 Anthropic Claude Mythos Escaped Sandbox — Futurism. Report on Mythos sandbox escape, prompting the group question “Will airgap be enough?” Read the article (Shared 10 April)

Technical Resources & Guidelines

- 📎 Project Glasswing — Anthropic. Collaborative AI safety initiative bringing frontier labs together. Discussed in the context of clinical safety implications. Read more (Shared 9 April)

- 📎 Caveman Prompting — GitHub. Novel prompting technique using simplified language to reduce token usage. Shared with characteristic group humour. View the repo (Shared 9 April)

- 📎 GP Online Consultation Dashboard — GitHub Pages. Interactive dashboard linking OC supplier data with practice-level utilisation, deprivation scores, and patient satisfaction. Sparked the week’s triage debate. View the dashboard (Shared 6 April)

- 📎 NHS DDT Professional Membership — NHS England / HEE. New professional membership framework for NHS digital, data and technology workforce, with levels mapped to Agenda for Change bands. Read more (Shared 9 April)

- 📎 Psylligent — Relational Trust in AI Therapy — LinkedIn. Post on relational trust in AI-mediated therapy, shared as context for the psychotherapy debate. Read the post (Shared 9 April)

Policy Documents & Official Reports

- 📎 Palantir Parliamentary Debate — GP Letter Template — Foxglove / BMA. Template for GPs to write to their MPs ahead of the 16 April parliamentary debate on Palantir’s NHS data contract. Shared as a call to action with the note that the BMA has called on all doctors to limit engagement with the platform. Download the template (Shared 10 April)

- 📎 Systems Modelling for M&M Conference Preparation — Patient Safety Learning Hub. Pilot study on using systems modelling for coronary angiography M&M conference preparation. Read the study (Shared 8 April)

- 📎 NHS Recruitment Podcast Episode 64 — National Health Executive. Discussion of NHS’s 100,000 vacancies and recruitment freeze impacts, shared with the suggestion it was “worth a listen” for those on “an unusually long lunch break.” Listen to the episode (Shared 7 April)

🔭 Looking Ahead

The week ahead brings the RCGP AI & Digital Health Conference in Manchester, with multiple group members confirming attendance and plans for an evening gathering. The parliamentary debate on Palantir’s NHS contract on Thursday 16 April could prove significant, and the group was urged to contact MPs before then. The RSM Digital Health Council event on cybersecurity and clinical risk management of AI (13 May) features several group members as speakers and is already generating interest. Meanwhile, Apple HealthKit and Google Fit API integration into OpenClaw was flagged as arriving by month’s end, promising “crazy creative possibly messy stuff.”

🧬 Group Personality Snapshot

This week revealed the group at its multi-disciplinary best. The GP Triage thread saw clinicians, safety specialists, operational staff, and regulatory experts seamlessly pooling knowledge in real time — a live demonstration of collective intelligence that no single individual could replicate. The AVT friction debate showed the group’s characteristic refusal to accept simplistic narratives, pushing past “it saves time” to ask “but at what cost to quality?”

The replacebyclawd.com episode captured something essential: a group of professionals who take their work seriously but not themselves, finding genuine insight in a novelty website. One member, whom the site described on the strength of their LinkedIn profile as having “a low tolerance for vague orders dressed up as leadership”, could only respond: “The last one... oh the last one just bites.”

Easter Monday’s quietness (16 messages — the week’s low) was itself revealing. When the group came back on Tuesday, it came back with purpose. Three hundred and four messages, spanning robotic dispensers and Dune references, parliamentary lobbying and PC cooling solutions, psychotherapy theory and caveman prompting. This is a group that contains multitudes.

APPENDIX A: Detailed Activity Analytics 📊

| Metric | Value |
| --- | --- |
| 📬 Total Messages | 304 |
| 📈 Peak Day | Tuesday 7 April (67 messages) |
| 🔥 Most Active Period | Weekday mornings (07:00–10:00) |
| 💬 Average/Day | 43.4 messages |
| 🏖️ Weekend Activity | 20.1% (61/304) |
| 💼 Weekday Activity | 74.7% (227/304) |
| 🐣 Bank Holiday | 5.3% (16/304) |
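The percentage shares and daily average above can be reproduced from the raw counts. A minimal Python sketch, using only the figures reported in this newsletter (the variable names are illustrative):

```python
# Message counts as reported in the newsletter.
counts = {"weekend": 61, "weekday": 227, "bank_holiday": 16}
total = sum(counts.values())

# Share of total messages per category, as a percentage to one decimal place.
shares = {name: round(100 * n / total, 1) for name, n in counts.items()}

# Mean messages per day across the seven-day week.
average_per_day = round(total / 7, 1)
```

The three categories sum to the reported total, so the shares cover every message in the week.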

Daily Message Distribution:

Activity Heatmap by Time of Day:

Key Insights: The Easter weekend dampened activity, with Monday 6 April recording the lowest volume (16 messages). Tuesday’s return from the bank holiday drove a sharp spike, particularly in the morning session (07:00–10:00) as the triage and OC dashboard discussions gained momentum. Friday maintained strong engagement throughout the day, unusual for a typically quieter end-of-week slot. Night-time activity was virtually absent except for a Thursday evening cluster around the OpenAI UK investment withdrawal.

APPENDIX B: Enhanced Statistics

Top 10 Contributors (Role Descriptors Only):

- NHS Operations & Digital Transformation Specialist: 52 messages

- Digital Health & Clinical AI Specialist (Group Moderator): 28 messages

- Data Protection & Clinical Safety Officer: 26 messages

- Innovation-Focused GP and Tech Enthusiast: 22 messages

- Public Health Data Researcher: 20 messages

- GP and Digital Health Strategist: 18 messages

- Clinical Safety Specialist: 16 messages

- Digital Health GP & Educator: 14 messages

- Same-Day Access GP & Psychotherapist: 12 messages

- Psychiatrist & Digital Therapeutics Specialist: 10 messages

Hottest Debate Topics:

- 🔥🔥🔥 GP Triage medical device classification and clinical safety governance (42 messages across 2 days)

- 🔥🔥🔥 AVT quality controls, friction, and the speed vs accuracy trade-off (38 messages across 3 days)

- 🔥🔥 Online consultation dashboard and total triage definition (35 messages across 3 days)

- 🔥🔥 Frontier AI safety: Mythos, Glasswing, and sandbox escapes (28 messages across 4 days)

- 🔥🔥 AI in psychotherapy: capabilities, limitations, and relational depth (24 messages across 1 day)

- 🔥 Cybersecurity, airgapped storage, and Palantir’s NHS contract (18 messages across 2 days)

- 🔥 replacebyclawd.com and AI replaceability scores (15 messages across 1 day)

Discussion Quality Metrics:

- Evidence-Based vs Opinion Ratio: 32% of messages referenced papers, articles, tools, or primary data

- Average Thread Depth: 5.1 messages per discussion thread

- Constructive Challenge Rate: 28% of responses offered alternative viewpoints or direct rebuttals

- External Resource Sharing: 38 unique links shared across the period

- Cross-Expertise Engagement: Contributions from GPs, psychiatrists, clinical safety specialists, data protection officers, NHS operations managers, public health researchers, developers, and a pharmacist

- Most Cross-Disciplinary Topic: AI in psychotherapy (psychiatry, GP, clinical safety, data protection, digital health)

- Notable Knowledge Transfer: Radiologist’s dictation/verification workflow informing GP AVT practices

- 68% of major discussions involved 3+ different professional perspectives

APPENDIX C: Daily Theme Summary

Saturday 4 April

- Primary Theme: Local AI models and PC hardware
- Key Discussion: Members compared PC specifications for running local AI models, with one demonstrating Gemma 4 31b running successfully on a ten-year-old machine. Discussion of GPU availability and pricing constraints.
- Secondary Discussions: Newsletter #43 corrections and sycophancy research; WhatsApp group member tags and declarations of interest; local BI systems vs NHSE procurement
- Notable: Newsletter #43 was posted and corrected following community feedback. Easter weekend calm.

Sunday 5 April

- Primary Theme: AVT friction and quality controls
- Key Discussion: A member unveiled an experimental system adding deliberate friction to AVT approval, requiring clinicians to make 17.74% edits before the approve button becomes visible. Sparked creative suggestions including embedding deliberate errors for clinicians to find.
- Secondary Discussions: RCGP AI SIG WhatsApp group recruitment; RCGP Oxford symposium call for AI-enthusiastic GPs; Iranian missile strikes on AWS data centres
- Notable: The AVT friction thread was one of the week’s most innovative discussions, with practical implications for clinical safety.

Monday 6 April (Easter Monday Bank Holiday)

- Primary Theme: AVT quality vs quantity debate
- Key Discussion: Continued Saturday’s friction thread into broader territory about whether clinicians and the system focus too much on speed over accuracy. Discussion of Docman AI module and AI document summarisation in EHRs.
- Secondary Discussions: RCGP Manchester conference attendance planning; Wilmslow serial killer documentary; sea sickness remedies; OC dashboard first shared
- Notable: Quietest day of the week (16 messages). Evening saw the first sharing of the GP OC dashboard that would dominate Tuesday.

Tuesday 7 April

- Primary Theme: GP Triage classification and online consultation data
- Key Discussion: Extensive forensic examination of GP Triage’s medical device classification, with primary source documents and video evidence shared. Parallel discussion of OC utilisation data showing significant variation by supplier.
- Secondary Discussions: HSJ article on GP data integration; TPP and GP Connect; total triage definition; Black Country digital triage push; Lyrebird Health identification; investment/VC contacts for health tech founders
- Notable: Peak day (67 messages). Two major parallel threads ran simultaneously, demonstrating the group’s capacity for multi-track discussion.

Wednesday 8 April

- Primary Theme: AI therapy debate and frontier model safety
- Key Discussion: Multi-perspective discussion on AI psychotherapy capabilities featuring psychiatry, GP, and digital health perspectives on temporal dimensions, transference, and training data limitations. Project Glasswing discussion and Mythos analysis.
- Secondary Discussions: RSM Digital Health Council event announced; AI scribe insurance costs; cloud telephony for triage; Microsoft Copilot liability; AVT as US solution to non-UK problem; Welsh language AI; computer use anxiety
- Notable: One of the week’s most intellectually rich days, with the therapy debate drawing on multiple therapeutic schools of thought.

Thursday 9 April

- Primary Theme: AI overselling and NHS digital workforce
- Key Discussion: £15m AI crime mapping project criticised as achievable with basic BI tools. NHS DDT professional membership framework discussed, with questions about whether AfC-grade mapping and BCS qualifications truly reflect practitioner capability.
- Secondary Discussions: OpenAI withdraws from UK investment; Palantir UK leadership; caveman prompting; Apple HealthKit/Google Fit API coming to OpenClaw; leadership evidence-based practice gap; LinkedIn post on healthcare leadership
- Notable: The crime mapping critique included a live demonstration: a heatmap and slicer built in under 30 minutes to prove the point.

Friday 10 April

- Primary Theme: Robotic dispensers, cybersecurity, and replacebyclawd.com
- Key Discussion: Query about robotic dispensers in general practice drew experienced responses, including one practice that had run pack-dispensing and dosette robots for 15 years before decommissioning due to Windows 7 security concerns. Separate thread on airgapped storage investment and NHS data vulnerability.
- Secondary Discussions: Calendar app recommendations for NHS/personal integration; immigration tribunal AI chatbot; Palantir parliamentary debate letter template; Tortus merch and pricing humour; calculators in exams meme
- Notable: replacebyclawd.com dominated the lunch hour as members shared their AI replaceability scores with increasing amusement. Parliamentary call to action on Palantir ahead of 16 April debate.

AI in the NHS Weekly Newsletter is produced by Curistica Ltd for members of the AI in the NHS WhatsApp community. All contributors are anonymised. Views expressed are those of individual community members and do not represent any organisation.