Executive Summary

This week’s 377 messages traversed a remarkable breadth of territory, from the strategic implications of EU product liability for AI, through a vigorous community debate on unregulated diagnostic tools, to a deeply human conversation about whether technology can ever replicate the irreplaceable value of GP continuity. The week’s standout exchange saw members passionately defending regulatory compliance against arguments for “capability first” approaches to an unregistered AI diagnostic platform, reaffirming the group’s foundational principle that safety is not an afterthought. Friday’s explosion of activity (149 messages) was fuelled by a spirited results management discussion that laid bare the gap between what existing systems can already do and the AI-driven future we keep reaching for. Across the week, members shared clinical safety decommissioning wisdom, debated cognitive debt in AI-augmented practice, and pondered whether anyone really needs to come back from holiday when AI doctors are apparently a click away.

Activity at a Glance

The week covered by Issue #37 saw 377 messages from 39 contributors across 8 days, with a dramatic peak on Friday 20 February (149 messages, 39.5% of all traffic). Weekday activity dominated at 76.1% (287 messages), and Friday alone accounted for just over half of those weekday messages (149 of 287, or 51.9%). Morning and afternoon periods saw the heaviest engagement; Friday afternoon’s 76 messages made it the single most active period of the week.

🔥 Major Topics

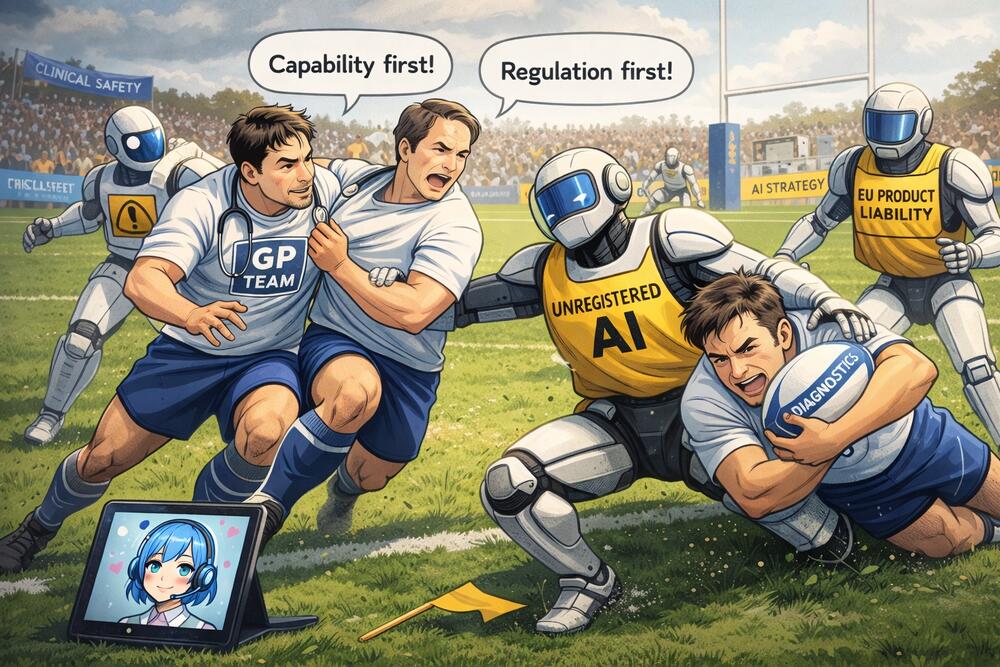

1. The Medome Debate: Capability vs Compliance

Friday 20 February (with roots in earlier discussions)

The week’s most heated exchange erupted when a health technology strategist shared a link to Medome, an AI diagnostic platform operating without UK regulatory approval, and invited the group to consider its capabilities independently of compliance concerns.

The response was swift and unequivocal. A health data entrepreneur fired back: “The product can’t be used in the UK, this is an NHS group.” A GP and digital health innovator noted that the tool had been discussed before, with its founder conceding that it would qualify as a medical device but that the necessary regulatory steps had not been taken. A clinical informatician framed the risk clearly: any clinician who accepts output from an unregistered tool as valid assumes full medical liability for any resulting error.

The strategist maintained they were interested purely in AI capability and differential diagnosis performance, arguing that “these tools are coming, like it or not” and that consumers would not differentiate between regulated and unregulated products. The community’s consensus, however, held firm. A GP and clinical safety officer observed that “rules and governance are integral part of the product and value proposition” and that healthcare brings essentially limitless liability.

The exchange crystallised a recurring group tension: acknowledging that frontier AI diagnostic capability is genuinely impressive, while insisting that capability without safety assurance is not a product worth discussing in an NHS context. One member suggested a DHSC national campaign advising patients not to “give your health data to unapproved clankers”.

2. Results Management: Before You Reach for AI, Use What You’ve Got

Friday 20 February (building on Tuesday-Thursday threads)

A Pulse Today article about a patient death linked to inadequate laboratory result flagging ignited one of the week’s richest and most practically grounded discussions. An innovation-focused GP shared the article, and a GP with informatics expertise responded with a passionate call to fix fundamental systems before layering AI on top.

The informatics-focused GP delivered a masterclass in existing EHR capability, sharing screenshots of rules-based automation that could handle common filing errors with “zero AI cost” and just two clicks. They argued forcefully that the NHS is “failing to use what we’ve got and diverting money away from front line patient care.”

A GP with results management expertise in Northern Ireland detailed the real-world problems: HbA1c values never highlighted as abnormal in EMIS until recently, urine cultures marked normal without checking for haematuria, and the B12 reference range update creating potentially hundreds of thousands of pounds in unnecessary prescriptions.

Several members acknowledged a potential role for AI in synthesising patient conditions, medications, and previous results to contextualise new findings, but the overwhelming consensus was that existing rules-based automation remains woefully underused.
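To make the "rules before AI" point concrete, here is a minimal Python sketch of the kind of rules-based check members described. It is not the EHR automation shown in the shared screenshots: the `LabResult` type, the `needs_clinician_review` function, the test codes, and the threshold table are all hypothetical, and the HbA1c value is an illustrative placeholder rather than clinical guidance.

```python
from dataclasses import dataclass


@dataclass
class LabResult:
    test_code: str   # hypothetical short code, e.g. "HBA1C"
    value: float     # numeric result in the test's usual unit
    lab_flag: str    # flag supplied with the result: "normal" or "abnormal"


# Hypothetical, illustrative thresholds only. Real rules would need
# local clinical sign-off and clinical safety review.
REVIEW_THRESHOLDS = {"HBA1C": 48.0}  # mmol/mol


def needs_clinician_review(result: LabResult) -> bool:
    """Return True if a result should not be filed as normal without review."""
    # Anything already flagged abnormal always goes to review.
    if result.lab_flag == "abnormal":
        return True
    # Catch results that arrive flagged "normal" but meet a local threshold.
    threshold = REVIEW_THRESHOLDS.get(result.test_code)
    return threshold is not None and result.value >= threshold


results = [
    LabResult("HBA1C", 52.0, "normal"),
    LabResult("HBA1C", 40.0, "normal"),
    LabResult("FBC", 1.0, "abnormal"),
]
for r in results:
    print(r.test_code, r.value, needs_clinician_review(r))
```

The design choice worth noting is that the rule is a plain lookup table plus one comparison: it is deterministic, cheap to run, and easy to audit, which is the property members contrasted with AI-based approaches.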

3. Clinical Cognitive Debt: What Happens When AI Does the Thinking?

Sunday 15 February, continuing through the week

A digital health specialist raised the concept of “cognitive debt” from a Simon Willison article, sparking a nuanced conversation about what clinicians might lose when AI handles documentation, reasoning, and recall.

A clinical safety specialist echoed the concern: “I really do worry that a mix of junior staff being cut, and the remaining ones’ reliance on tech that removes learnt reasoning will come back to bite in a decade when they themselves become seniors.”

A GP trainer offered a counterpoint from lived experience: freed from the burden of documentation, they were pushing trainees harder in consultations, and patients were receiving richer interactions.

4. Continuity of Care: Can AI Fill the Gap?

Friday 20 - Saturday 21 February

A community member shared their own Substack writing on patient arcs, continuity, and fragmented care experiences, asking whether AI could help clinicians “hold the thread” of a patient’s story. This triggered one of the week’s most profound exchanges.

A landmark Norwegian study showing a 25% reduction in all-cause mortality associated with 15+ years of GP continuity was shared and rigorously scrutinised. The discussion deepened on Saturday morning when an innovation-focused GP argued that continuity cannot be reduced to “a mathematical data input”, sharing clinical vignettes where human observation led to diagnoses that no AI system could replicate.

A digital health leader offered the week’s most quotable synthesis: “the utility of AI in healthcare is about raising the floor, not raising the bar.”

5. AI Liability, Decommissioning, and the Regulatory Landscape

Saturday 14 - Wednesday 18 February

The week opened with significant discussion of AI liability in healthcare, flagged as “this year’s hot topic” by a medical physicist. A digital health specialist detailed the revised EU Product Liability Directive (2024/2853), under which AI systems are classified as “products” subject to strict liability, with a burden of proof that can shift to the manufacturer, for products placed on the market from December 2026.

Mid-week, a clinical safety specialist sought practical guidance on DCB0160 decommissioning processes, prompting a collaborative knowledge-sharing session. A medical physicist enriched the conversation with historical context about NHS Connecting for Health’s PACS contracts, where data migration was left out of national contracts, an experience that “killed the case for imaging data in the cloud for ten years.”

😄 Lighter Moments

The week had its share of memorable asides. A practice manager’s blunt late-night assessment of GP compliance awareness prompted a colleague’s early morning verdict: “Woke up today and chose violence.”

When a GP platform’s name appeared in unfortunate proximity to a mention of a RAG tool for browsing the Epstein Files, the platform’s creator responded with mock alarm: “I saw my name and then the word Epstein.”

The week’s hardware arms race saw members casually comparing setups: Mac Studios with 256GB RAM, dual NVIDIA RTX 5800 cards with 96GB VRAM, and one specialist running three machines almost continuously on Claude.

📝 Quote Wall

“I really do worry that a mix of junior staff being cut, and the remaining ones’ reliance on tech that removes learnt reasoning will come back to bite in a decade.” — Clinical safety specialist, on cognitive debt

“The level of ‘I don’t know anything about compliance but we want to play with this anyway’ out in GP land is huge.” — Practice manager

“The utility of AI in healthcare is about raising the floor, not raising the bar.” — Digital health leader

“Do it right, there is no excuse for not following the basic legal requirements.” — Health data entrepreneur

“We’re failing to use what we’ve got and diverting money away from front line patient care.” — GP with informatics expertise

“Killed the case for imaging data in the cloud for ten years.” — Medical physicist, on the PACS data migration debacle

“Better having to restrain wild horses than raising the dead.” — Paraphrased community wisdom

📎 Journal Watch

Academic Papers & Key Studies

📎 AI Liability in Healthcare: A Systematic Review — Frontiers in Medicine. Recommended as the definitive entry point to healthcare AI liability literature. Full paper

📎 Continuity of Care and All-Cause Mortality — Sandvik et al. (BJGP via PMC). Norwegian study of 4.55 million patients showing a 25% reduction in all-cause mortality associated with 15+ years of GP continuity. Full paper

📎 Continuity of Care and Emergency Admissions — Barker et al. (2017, BMJ). UK study linking greater GP continuity with fewer hospital admissions for ambulatory care sensitive conditions. Full paper

📎 AI Regulation in Healthcare — BMJ (Gordon et al.). Paper on AI regulation. Full paper

📎 Improving LLM Performance Through Structural Recognition — arXiv. Playing to existing strengths yields results. Full paper

Industry & News Articles

📎 NHSE Moving Away from Electronic Patient Records — Digital Health Article

📎 NHSE Results Flagging Review After Patient Death — Pulse Today Article

📎 Cognitive Debt in AI Agent Use — Simon Willison Article

📎 AI and the End of Friction as a Policy Lever — Tom Loosemore Article

📎 Tandem Health Achieves MDR Class IIa Certification Article

📎 GRAIL Cancer Screening Trial Results — Reuters Article

📎 France Ditches Zoom/Teams for Homegrown System — ABC News Article

Technical Resources & Tools

📎 The Ralph Wiggum Loop/Technique — AI agent feedback loop methodology. Resource

📎 Claude Code Plugins — Anthropic Repository

📎 Vigil MHRA Reporting Assistant — Curistica Tool

Policy & Governance Documents

📎 NHS App AI Triage Roadmap — NHS Digital Document

📎 FDP Product Screening Questionnaire — NHSE Document

📎 SystmOne GP Excellence Awards — TPP Details

🔮 Looking Ahead

The continuity of care debate shows no signs of resolution and will likely evolve as members explore whether AI can meaningfully bridge the gap between fragmented services and the deep patient knowledge that comes from longitudinal relationships.

Results management emerged as a rich vein for practical improvement, with several members expressing interest in building AI-augmented tools. The NHS Hack Day in Cardiff next month may see some of these ideas take shape.

The Medome debate will recur as more unregulated AI health tools enter the market. The EU Product Liability Directive deadline (December 2026) looms as a critical inflection point.

🧬 Group Personality Snapshot

Issue #37 captures a community that has matured into something quite distinctive: a space where a practice manager can deliver a devastating assessment of GP compliance awareness at 11pm and be told they “chose violence” by 5:37am, where members casually compare home server setups worth the price of a small car, and where someone accidentally posting a restaurant booking is met with dignified silence rather than mockery. The Medome exchange demonstrated how the group’s regulatory backbone has become instinctive rather than performative. The continuity of care discussion, meanwhile, revealed a community of practitioners who genuinely care about the human dimensions of medicine even as they build the digital future. This is a group that can discuss EU product liability directives and Pizza Express in Woking in the same breath, and somehow both conversations make sense.

APPENDIX A: Detailed Activity Analytics 📊

Dashboard

- Total Messages: 377

- Active Contributors: 39

- Peak Day: Friday 20 Feb (149 messages)

- Quietest Day: Saturday 21 Feb (14 messages)

- Most Active Period: Friday Afternoon (76 messages)

- Average/Day: 47.1 messages

- Weekday Activity: 76.1% (287/377)

- Weekend Activity: 23.9% (90/377)

- URLs Shared: 56
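For readers who want to check the derived figures, the short Python sketch below recomputes them from the raw counts on the dashboard. The variable names and the `pct` helper are illustrative assumptions, not part of any tooling used to produce this newsletter.

```python
# Raw counts copied from the dashboard above; names are illustrative.
total_messages = 377
days = 8
friday_messages = 149
weekday_messages = 287
weekend_messages = total_messages - weekday_messages  # 90


def pct(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


summary = {
    "average_per_day": round(total_messages / days, 1),
    "friday_share_of_total": pct(friday_messages, total_messages),
    "weekday_share": pct(weekday_messages, total_messages),
    "weekend_share": pct(weekend_messages, total_messages),
    "friday_share_of_weekdays": pct(friday_messages, weekday_messages),
}

for name, value in summary.items():
    print(f"{name}: {value}")
```

Run as written, this reproduces the dashboard values: 47.1 messages per day, 39.5% of traffic on Friday, and a 76.1% / 23.9% weekday to weekend split. It also shows Friday at 51.9% of weekday messages.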

Daily Message Distribution

[Chart: daily message distribution]

Activity Heatmap

[Chart: activity heatmap]

APPENDIX B: Enhanced Statistics

Top 10 Contributors (Role Descriptors Only)

- Digital Health & Clinical AI Specialist (Group Moderator): 61 messages

- Clinical Safety Specialist: 32 messages

- Health Technology Strategist: 24 messages

- Innovation-Focused GP: 22 messages

- GP and Clinical Safety Lead: 21 messages

- Clinical Safety Lead (Deployer): 18 messages

- Health Data Entrepreneur: 16 messages

- Digital Health Informatician: 14 messages

- Digital Health Academic (GP): 14 messages

- Medical Physicist & Regulatory Specialist: 13 messages

APPENDIX C: Daily Theme Summary

Saturday, 14 February

Primary Theme: AI Liability in Healthcare and EU Product Liability. The week opened with discussion of the revised EU Product Liability Directive and its implications for AI manufacturers. A medical physicist flagged AI liability as “this year’s hot topic.”

Sunday, 15 February

Primary Theme: Clinical Cognitive Debt and AI-Augmented Practice. The concept of cognitive debt from agentic AI use was introduced and applied to clinical settings. Group membership noted at 736 members.

Monday, 16 February

Primary Theme: NHS App AI Triage and Clinical Liability. NHS Digital’s AI-enabled triage roadmap prompted debate about who carries clinical liability when AI triage is delivered through the NHS App.

Tuesday, 17 February

Primary Theme: System Decommissioning and Data Governance. Historical lessons from NHS Connecting for Health’s PACS contracts. Palantir’s NHS spending drew sharp criticism.

Wednesday, 18 February

Primary Theme: Data Governance and the Federated Data Platform. NHSE publication on FDP DPIA screening prompted critique of centralising organisational data protection duties.

Thursday, 19 February

Primary Theme: AVT Consent and Patient Communication. Research paper on patient anxiety around AI scribes prompted discussion of consent processes and DCB0160 requirements.

Friday, 20 February

Primary Theme: Three converging debates on Medome capability vs compliance, results management, and continuity of care. With 149 messages, this was the most active day by a substantial margin.

Saturday, 21 February

Primary Theme: Continuity of Care Debate Conclusion. The week closed with a digital health leader’s synthesis that AI should focus on “raising the floor, not raising the bar.”

AI in the NHS Weekly Newsletter is produced by Curistica Ltd for members of the AI in the NHS WhatsApp community. All contributors are anonymised. Views expressed are those of individual community members and do not represent any organisation.