Executive Summary

This week's discussion ranged from a striking demonstration of AI-generated fake medicine packaging to a cardiologist's impassioned manifesto for what AI should actually be doing on hospital wards. In between, the group wrestled with NHS procurement dysfunction, the ethics of anthropomorphising clinical AI tools, an ICB's controversial use of Copilot to assist a staff consultation during restructuring, the challenge of explaining medical device classification to non-experts, and NHS England's sudden decision to withdraw its public GitHub repositories over AI hacking fears. A consistently busy week, with regulatory debate, patient safety concerns, and sharp humour in equal measure.

Major Topic Sections

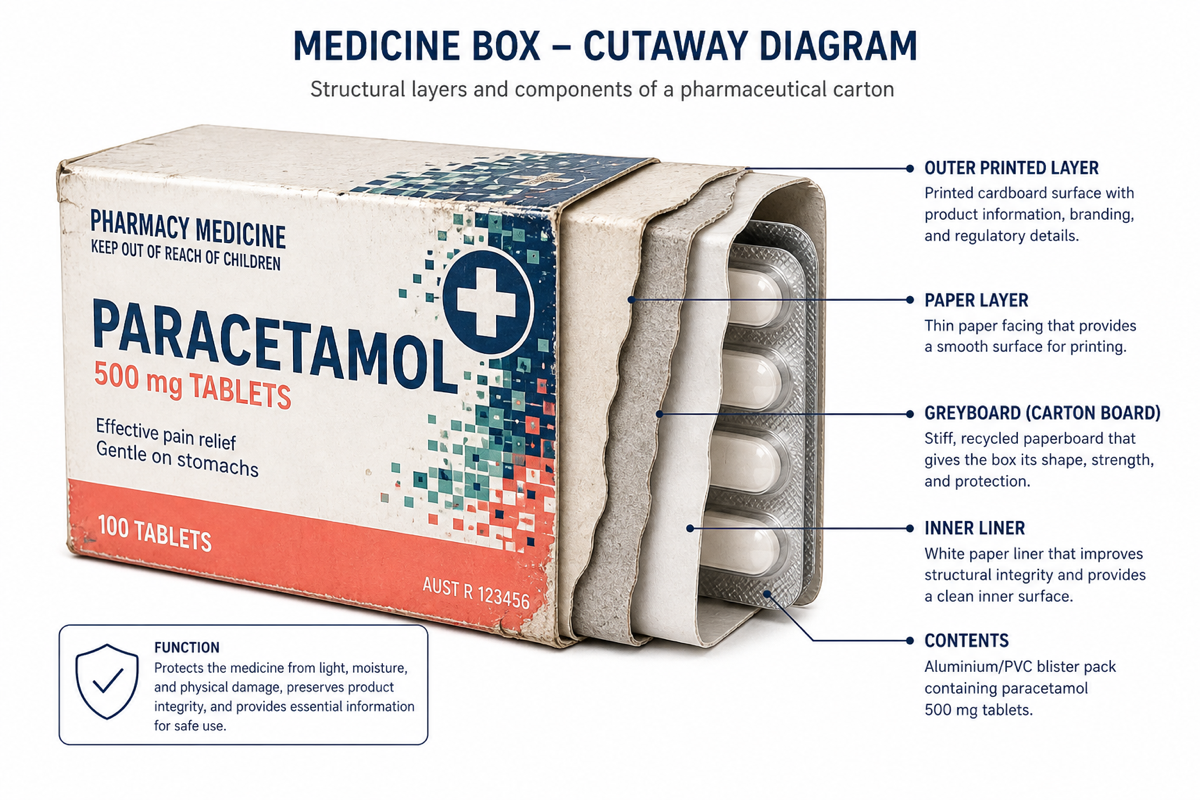

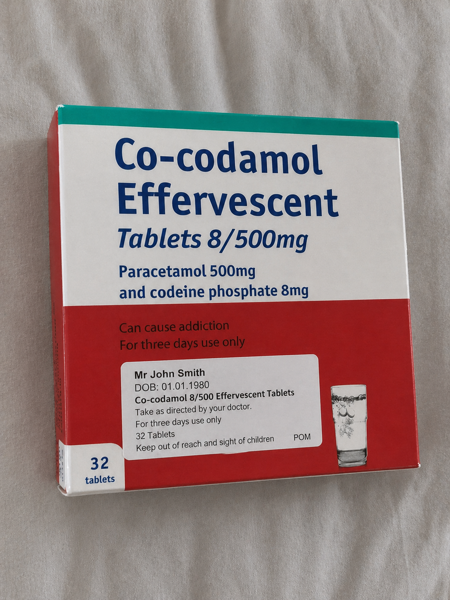

1. AI-Generated Fake Medicine Packaging: A New Patient Safety Hazard

Sunday morning's most consequential thread began when the newsletter curator shared images of AI-generated medicine packaging created using GPT5.5's improved image generation capabilities. The results were disturbingly convincing -- worn corners, realistic shadows, plausible label formatting -- and the group quickly identified a genuine clinical risk.

"Do any GPs accept screen shots of medicine labels as proof of medication? With AI imaging is this an issue?" -- a secondary care digital innovator

Several GPs confirmed that photographic proof of medication is commonly accepted in online consultations, particularly when secondary care correspondence is delayed. The implications were immediately apparent: patients seeking controlled or weight-loss medications could fabricate convincing evidence of existing prescriptions.

"There are precedents for this in the GLP1a space, where submission of proof of weight and body image is common" -- the newsletter curator

The group explored guardrails and mitigations. One digital health leader noted that Copilot refused to generate similar images, whilst another confirmed Perplexity also blocked the request. The curator highlighted that "what was concerning was that the app didn't have any guardrails for the creation of this image." Suggestions ranged from live video verification to NHS App camera-based authenticated image transfer, with one member noting that "we will probably have AI processing of the images received by patients."

A call was made to collaborate on a short paper documenting this hazard -- a tangible output that could inform both regulatory and clinical guidance.

2. NHS Procurement: The Vendor Accountability Crisis

Tuesday's longest thread dissected the dysfunctional dynamics of NHS technology procurement, drawing contributions from across the vendor, clinical, and commissioning spectrum.

The catalyst was a vendor's frustration with tender terms: "Currently working on a tender, where the NHS legals must be accepted as is or you are excluded. In the most basic sense this is madness... 'We impose terms, you must accept'. Does not lead to a healthy partnership."

"Underbid then underperform knowing that the NHS can't afford to replace you with the more expensive option. The heart of so many bad procurements I've seen" -- an NHS commissioning expert

The regulatory affairs specialist cut to the core: "Fundamental lack of ability (partly due to contracts) and interest to hold poor vendors to account. This is relatively easily fixed." Others noted that the people making procurement decisions are "often multiple steps removed from the people affected" and that "most people have a day job that is already at capacity."

The AI scribe market featured separately, with discussion of a LinkedIn post about a UK AI scribing contract. The group's vendor members pushed back against oversimplified narratives, noting that "it's not one contract" and that system integration, not just pricing, drives decisions. One GP vendor observed bluntly: "Virtually all scribe do the same, so value comes down to cost."

3. The Ethics of Anthropomorphising Clinical AI

A live demonstration of a GP receptionist AI tool prompted a pointed exchange spanning Tuesday and Wednesday. A commissioning expert reported: "I'm in a meeting now where they're demo-ing a GP receptionist AI tool. And the sales folk are calling it 'she'. No... and no.... really folks."

The tool in question, named with a human first name, drew criticism for reinforcing problematic design patterns. A GP innovation specialist added: "I hate it when AI tools take on the names of individuals."

The newsletter curator provided historical and regulatory context: "Standard accepted ethical practice is to adopt a non-gendered persona, and be clear about AI status." References were made to the EU AI Act, WHO 2021 guidance, and the 2019 UNESCO report -- the latter titled "I'd blush if I could," referencing prior Siri behaviours. The curator noted that "defaulting to female is considered poor practice, and the use of first names/realistic images is a recognised 'dark pattern'."

4. Copilot for Job Cuts: AI in NHS Restructuring

Thursday's most heated single story was the HSJ report that an ICB had used Microsoft Copilot to assist with staff consultation during restructuring.

"I can't think of many uses of AI that are worse than matching human to jobs in a staff consultation" -- an NHS commissioning expert

The same contributor noted that "the comments on that article are interesting seeing other ICBs using it as well... I wonder if that'll end up as part of any industrial tribunal at some point in the future." They pointedly highlighted Microsoft's own terms of use, which describe Copilot outputs as "entertainment."

A GP drew the comparison: "Quite a brutal performance management system you have for your clinicians there." Another member added: "Thinking on this, NHS JDs are probably about as accurate as political manifestos, and only slightly more reliable than CVs."

5. Medical Device Classification: Lost in Translation

Friday brought a relatable struggle: explaining medical device classification to a non-expert audience.

"I mentioned that the tool is Class III CE certified... she replied with 'does that mean it's dangerous and unsafe for patients?' and I struggled to recover it from there" -- an NHS commissioning expert

A vendor regulatory specialist offered a reframing: "I think that class 3 are better described as the most deeply assessed and scrutinised, with class 1 being 'we mark our own homework'." This sparked debate about whether classification correlates with risk at deployment.

The regulatory standards expert weighed in with the MHRA's own position: "The MHRA does not carry out assessments or approve medical devices placed on the UK market. It is not our role as a responsible regulator to endorse any devices."

A clinical safety specialist's dry observation closed the loop: "Because it shouldn't be Class 1 in the first place. Realised its been a while since I said that."

6. NHSE Withdraws GitHub Code Over AI Hacking Fears

Friday also brought a New Scientist report that NHS England was withdrawing publicly available source code from GitHub, citing AI hacking risks.

"NHS England is hurriedly withdrawing all the software it has written from public view because of the perceived risk of hacking from cutting-edge artificial intelligence. Security experts say the move is unnecessary and counterproductive" -- New Scientist, shared by a GP digital health leader

The group reacted with a mixture of bemusement and concern. One member quipped: "Quick rush to GitHub reveals NHSE animal crossing..." Another speculated it was "fallout from Mythos." A pragmatic voice noted: "If it's ever been on the open web it's already been scraped."

7. The Cardiologist's AI Manifesto

Saturday morning brought the week's most vivid single message -- a hospital cardiologist, fresh from a week on call, articulating exactly what AI should be doing to support acute medicine. The message described a reality of predicting deaths, managing cardiac arrests, arranging transfers, completing discharge summaries, counselling anxious relatives, and teaching juniors -- all whilst wanting to return to the procedural and research work that drew them to the specialty.

"Having been on call on the wards all week, I would love Claude Coworks to build a twin hospital, simulation ward of cardiac patients, predict the 3 deaths and 2 cardiac arrests... so I can get back to putting in pacemakers" -- a consultant cardiologist

A fellow clinician responded with succinct perspective: "One day it might happen, but when that happens, I would Not be worried about my job but the future of humanity." Another simply offered: "Sky net!"

Lighter Moments

The Half-Hour Tax: "I lose half an hour a day in this group" -- a pharmacist/digital health lead

Professional Identity Crisis: "How you're known in this group -- Well depends if we mean newsletter I have a new profession every week" -- a clinical safety specialist

The Stag Do Concern: "I had this concern. Was worried the Stag Do group chat would start calling out my credentials" -- a health tech leader

Netflix and Regulations: After one member shared a detailed device classification analysis done over dinner: "Was there nothing on netflix to watch?" -- a health tech entrepreneur

The Out-of-Office Champion: "My NHS email has been out of office for at least 5 years now. Haven't noticed any downsides yet" -- a GP innovation specialist

Retro Gaming Therapy: "I have the projector and sound system in our education room. Be a shame not to add in some retro consoles?" -- a practice manager, on staff morale

Quote Wall

"Underbid then underperform knowing that the NHS can't afford to replace you with the more expensive option" -- an NHS commissioning expert, on procurement dysfunction

"I would love Claude Coworks to build a twin hospital, simulation ward of cardiac patients, predict the 3 deaths and 2 cardiac arrests" -- a consultant cardiologist, on what AI should actually do

"Because it shouldn't be Class 1 in the first place. Realised its been a while since I said that" -- a clinical safety specialist, on AI device classification

"The MHRA does not carry out assessments or approve medical devices placed on the UK market" -- a regulatory standards expert, quoting the MHRA directly

"I'm in a meeting now where they're demo-ing a GP receptionist AI tool. And the sales folk are calling it 'she'. No... and no..." -- an NHS commissioning expert, on anthropomorphism

"My NHS email has been out of office for at least 5 years now. Haven't noticed any downsides yet" -- a GP innovation specialist

"NHS JDs are probably about as accurate as political manifestos, and only slightly more reliable than CVs" -- an NHS commissioning expert, on AI job matching

"It's just an admin tool" -- a voice tech specialist, on how AI is commonly downplayed

"I lose half an hour a day in this group" -- a pharmacist/digital health lead

"One day it might happen, but when that happens, I would Not be worried about my job but the future of humanity" -- a GP digital health leader, on fully autonomous clinical AI

Journal Watch

Clinical & Research

Mayo Clinic AI detects pancreatic cancer up to 3 years before diagnosis -- Mayo Clinic News Network

AMBOSS NoHarm Study -- Amboss

Rosalind Franklin and the DNA story -- Nature

Policy & Regulation

First AI drug prescriber in US sparks concerns -- The Pharmacist

NHSE withdraws GitHub code over AI hacking fears -- New Scientist

ICB uses Copilot to assist with staff consultation -- HSJ

Palantir workforce compliance tool used by Met Police -- The Guardian

Trusts 'really poor' at IT rollouts, Mackey warns -- HSJ

Industry & Tools

Superhuman email launches Claude integration -- Superhuman

NHS-GPT: A 17bn a Year National Asset -- LinkedIn

Anthropic spyware allegations -- That Privacy Guy

The Atlantic: AI Bubble Revenue -- Anthropic analysis -- The Atlantic

NVIDIA free model testing platform -- NVIDIA

Trip Medical Database -- Trip Medical

Heidi Evidence -- Clinical evidence feature -- Heidi Health

NHS Digital Regulations Service -- NHS England

Microsoft Copilot Terms of Use -- 'Entertainment' disclaimer -- Microsoft

Looking Ahead

Upcoming Events

TEDx Cambridge University (27 June 2026) -- Speaker and sponsor slots still available; a group member is on the organising committee

World Pharma Summit -- Group members speaking on Day 1 alongside a London GP

Clinical Game Jam -- Highlighted via LinkedIn, with at least one member connecting Claude Code to Unity for game development

Hardian Health Summit at the BMA -- Sessions on regulation and safety topics; slides to be shared

Ongoing Threads

Open Evidence access loss -- The group is actively seeking alternatives, with Amboss, Trip Medical, Heidi Evidence, and Medwise.ai all discussed

AI-generated fake packaging -- A collaborative paper remains under discussion

Open Evidence allegations -- Cryptic hints at forthcoming allegations regarding 'key figures'; more expected shortly

AI adoption study -- Cambridge University study still collecting responses from practising UK clinicians

eRD reform -- The group's perennial frustration with electronic repeat dispensing shows no sign of resolution

Group Personality Snapshot

This week felt like the group finding its stride after the bank holiday lull. The vendor-commissioner tension in Tuesday's procurement thread was genuine but constructive -- people who disagree on process but share frustration with outcomes. The device classification thread on Friday was almost pedagogical, with multiple experts gently correcting each other's framings. Saturday's cardiologist manifesto injected raw clinical reality into a group that sometimes risks getting abstract. And throughout it all, the dry humour remained: a stag do WhatsApp group checking credentials, a five-year out-of-office, and the eternal question of whether Netflix might have been a better use of an evening. The community continues to function as an informal but surprisingly rigorous cross-sector advisory group -- part support network, part regulatory seminar, part comedy panel.

Appendix A: Enhanced Statistics

Total messages: 230+ across the period

Most active day: Thursday 30 April

Active days: 8/8 (full coverage)

Links shared: 25+

Images/videos shared: 10+

Appendix B: Daily Theme Summary

Saturday 25 April

The week opened with the previous issue's publication (Issue #46) and a discussion about Palantir's workforce compliance tool being used by the Met Police. A radiology AI commentary piece prompted debate about whether the sector is too focused on 'shoehorning blingy tech into a solution' rather than solving real problems.

Sunday 26 April

The day's dominant thread was AI-generated fake medicine packaging -- a safety hazard demonstration using GPT5.5 that generated realistic medication box images. GPs confirmed that photographic proof of medication is routinely accepted in online consultations. The eRD discussion emerged in parallel, with frustration that 'fancy AI' was being proposed for problems solvable with 'simple programmatic logic.' The first US AI drug prescriber story added international context.

Monday 27 April

A lighter day. Retro gaming videos and nostalgia kicked off the morning, with a practice manager making the case for consoles in the education room. The Clinical Game Jam was shared. Saudi Medtech opportunities were highlighted. The evening brought a substantive debate about an AI scribing contract.

Tuesday 28 April

Procurement dominated. A vendor's frustration with non-negotiable NHS contract terms sparked 30+ messages on underbidding, vendor accountability, and contract conduct. The anthropomorphism thread began with a live demo of a GP receptionist AI tool being gendered by its sales team. The Superhuman-Claude email integration was shared.

Wednesday 29 April

The anthropomorphism discussion continued with detailed ethical guidance on AI persona design, referencing the EU AI Act, WHO, and UNESCO frameworks. TEDx Cambridge University speaker recruitment was shared. Legal documentation prohibiting AI use was noted with irony, given that Copilot is enabled automatically in NHS.net.

Thursday 30 April

The busiest day of the week. The ICB Copilot job-matching story broke and drew strong reactions. Microsoft's own 'entertainment' disclaimer was highlighted. Mayo Clinic's pancreatic cancer AI detection study was celebrated. Open Evidence access loss was mourned, with alternatives proposed. Cryptic hints about forthcoming allegations regarding Open Evidence 'key figures' added intrigue.

Friday 1 May

Medical device classification communication dominated -- a commissioning expert's attempt to explain Class III to their spouse became a springboard for debating whether classification reflects risk or scrutiny. The NHSE GitHub withdrawal story generated bemusement. The MHRA's non-endorsement position was quoted.

Saturday 2 May

The cardiologist's vivid ward-round AI manifesto opened the day. The Atlantic's piece on Anthropic revenue and the AI bubble was shared. NVIDIA's free model testing platform was highlighted. The newsletter period closed with the group contemplating both the transformative potential and existential implications of truly autonomous clinical AI.

This newsletter was generated from the AI in the NHS WhatsApp group conversations between 25 April and 2 May 2026. All contributors are anonymised by role descriptor only. Direct quotes have been verified against source messages. URLs have been checked against the source data.

AI in the NHS is a community of practice for clinicians, technologists, researchers, and policymakers exploring how artificial intelligence can responsibly benefit the National Health Service.

Newsletter #47 -- compiled by Curistica.